Creating more complex surveys

We’ll walk you through creating multi-page surveys and using logic to take respondents to different parts of a survey based on their answers.

Class 4: Multipage Surveys and Logic

As you become more confident with online surveys, you may want to start building surveys that are longer and more in-depth.

While you should always bear in mind the general principle of keeping an online survey as short as possible, with the right audience and the right questions you can keep respondents engaged through longer surveys. Over time, experience will probably show you the sweet spot for your surveys. Analysing a survey’s partial responses also shows you how far respondents who did not complete the survey got before abandoning it.
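As a rough illustration of that kind of drop-off analysis, the sketch below counts how many partial responses stopped on each page. It assumes you have exported, for each partial response, the number of the last page the respondent reached; the function name and the sample data are hypothetical and not part of any particular survey tool.

```python
from collections import Counter


def drop_off_by_page(last_page_reached, total_pages):
    """Count how many partial responses stopped on each page.

    last_page_reached: one entry per partial response, giving the
    1-based number of the last page that respondent saw (assumed export).
    """
    counts = Counter(last_page_reached)
    # Include pages with no drop-offs so the result covers the whole survey.
    return {page: counts.get(page, 0) for page in range(1, total_pages + 1)}


# Hypothetical data: seven respondents abandoned a four-page survey.
partials = [1, 2, 2, 3, 2, 1, 2]
print(drop_off_by_page(partials, total_pages=4))
# {1: 2, 2: 4, 3: 1, 4: 0}
```

A page with a noticeably higher count than its neighbours (page 2 in this made-up data) is a good candidate for shortening or rewording.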

To make that kind of drop-off analysis possible, and for other reasons we’ll go into later in the article, it’s a good idea to break your survey up into multiple pages. How many questions go on each page is a matter of taste and judgement. You need to keep each page reasonably short and sweet without irritating respondents with lots of extra clicks that slow down their progress.

Inserting the Page Breaks

You may remember that in an earlier article we said that 3 questions per minute was a decent rule of thumb for assessing the length of a survey. This can also be used as a basis for breaking up your survey into pages. If we aim for respondents to spend around a minute on each page, that works out at roughly three questions per page, which shouldn’t feel like too many clicks. Once you start getting a feel for things, you can think in more detail about the questions on each page and take note of their complexity. A comment box, or a matrix with multiple rows, columns, and options, is likely to take longer than a simple yes/no.
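The one-minute-per-page idea can be sketched as a simple grouping routine. Only the 3-questions-per-minute average (about 20 seconds per question) comes from the rule of thumb above. The per-type timings in `SECONDS_PER_TYPE` are illustrative assumptions, and the function and question names are hypothetical.

```python
# Assumed time estimates in seconds; adjust to what you observe in practice.
SECONDS_PER_TYPE = {
    "yes_no": 10,
    "multiple_choice": 20,  # roughly the 3-per-minute average
    "matrix": 45,
    "comment": 60,
}


def paginate(questions, page_budget_seconds=60):
    """Group (name, type) questions into pages of about one minute each."""
    pages, current, used = [], [], 0
    for name, qtype in questions:
        cost = SECONDS_PER_TYPE[qtype]
        # Start a new page when this question would exceed the budget.
        if current and used + cost > page_budget_seconds:
            pages.append(current)
            current, used = [], 0
        current.append(name)
        used += cost
    if current:
        pages.append(current)
    return pages


questions = [
    ("Q1", "yes_no"), ("Q2", "multiple_choice"), ("Q3", "multiple_choice"),
    ("Q4", "matrix"), ("Q5", "comment"), ("Q6", "yes_no"),
]
print(paginate(questions))
# [['Q1', 'Q2', 'Q3'], ['Q4'], ['Q5'], ['Q6']]
```

Treat the result as a starting point only. You will often want to move a question to keep related topics together on one page.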

Once a survey is broken up into pages, other possibilities open up. You can randomise the order in which pages (or a selection of pages) are shown to the respondent. This is usually done to reduce a bias effect known as “priming”. Priming is when a respondent’s reaction to one question affects their response to a question that follows it in the survey. Randomising the order of the pages in the survey (and the order of the questions on each page) can reduce this effect. Of course, this feature should be used with care, and is not compatible with other features such as Skip Logic.
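To make the idea of randomising a selection of pages concrete, here is a minimal sketch that shuffles a block of pages for each respondent while keeping the pages before and after it in place. The function name, the page names, and the `seed` parameter are assumptions for illustration. A survey tool would normally handle this for you through a setting.

```python
import random


def randomised_page_order(pages, shuffle_from, shuffle_to, seed=None):
    """Return a new page order with pages[shuffle_from:shuffle_to] shuffled.

    Pages outside that range (for example an intro and a thank-you page)
    keep their positions. Passing a seed makes the order repeatable.
    """
    rng = random.Random(seed)
    block = pages[shuffle_from:shuffle_to]  # a copy; the input is not changed
    rng.shuffle(block)
    return pages[:shuffle_from] + block + pages[shuffle_to:]


pages = ["intro", "brand_a", "brand_b", "brand_c", "thank_you"]
# Each respondent gets their own order for the three middle pages.
print(randomised_page_order(pages, 1, 4))
```

Because each respondent sees the middle pages in a different order, any priming effect from one page on the next is spread across the pages rather than always favouring the same one.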

Adding Logic

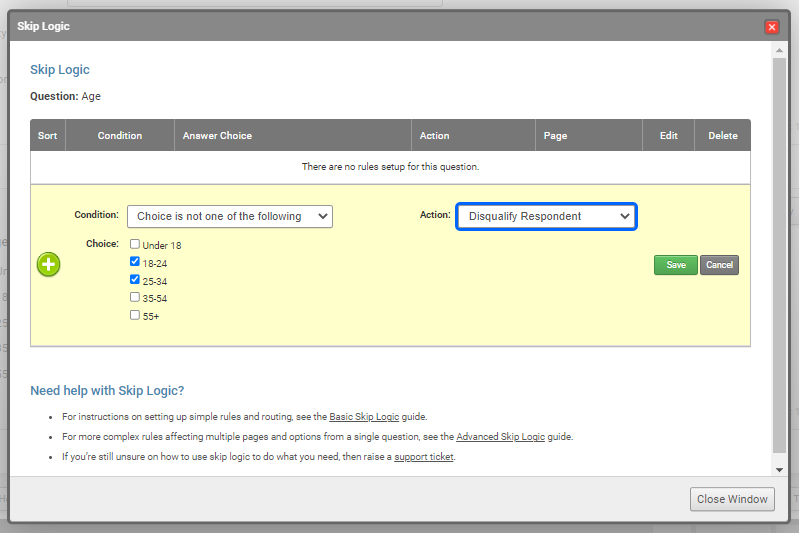

This brings us neatly on to skip logic itself. This is a feature, sometimes referred to as “branching”, where code is added to the survey in the form of rules that apply to specific questions. The rules direct the respondent to pages based on their answers, taking them to pages with questions we want them to answer, and away from ones that we don’t want them to answer or that are irrelevant to them.
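Conceptually, a skip logic rule says "if this question has this answer, go to that page". The sketch below models that idea in a few lines. It is not how any particular survey tool stores its rules, and the `SkipRule` class, the `next_page` function, and the page and question names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkipRule:
    question: str    # question the rule is attached to
    answer: str      # answer that triggers the rule
    go_to_page: str  # page the respondent is sent to


def next_page(current_page, answers, rules, page_order):
    """Return the next page to show, or None at the end of the survey."""
    # The first matching rule on the current page wins.
    for rule in rules.get(current_page, []):
        if answers.get(rule.question) == rule.answer:
            return rule.go_to_page
    # No rule matched: fall through to the next page in order.
    idx = page_order.index(current_page)
    return page_order[idx + 1] if idx + 1 < len(page_order) else None


page_order = ["usage", "feedback", "end"]
rules = {"usage": [SkipRule("used_product", "No", "end")]}

print(next_page("usage", {"used_product": "No"}, rules, page_order))   # end
print(next_page("usage", {"used_product": "Yes"}, rules, page_order))  # feedback
```

Respondents who have not used the product skip the feedback page entirely, while everyone else continues through the survey in the normal order.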

This isn’t really the place to go into deep detail about setting up complex skip logic; we have the knowledgebase for that. Instead, we want to focus on what you can and can’t set up, and on how to decide whether to implement it for a survey.

A very common use is in market research surveys, where researchers want to target respondents based on demographic or lifestyle criteria. Logic is set up so that respondents who don’t meet those criteria are disqualified from the survey.
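A screening rule of this kind can be thought of as a check against a set of allowed answers. The following sketch assumes a hypothetical screener with two questions. The criteria, field names, and page names are invented for illustration.

```python
def meets_criteria(respondent, criteria):
    """Return True if every screening answer is among the allowed answers."""
    return all(
        respondent.get(field) in allowed
        for field, allowed in criteria.items()
    )


def page_after_screener(respondent, criteria):
    """Send qualifying respondents on; send the rest to a disqualification page."""
    return "main_survey" if meets_criteria(respondent, criteria) else "disqualified"


# Hypothetical target group: car owners aged 18 to 34.
criteria = {"age_band": {"18-24", "25-34"}, "owns_car": {"Yes"}}

print(page_after_screener({"age_band": "25-34", "owns_car": "Yes"}, criteria))
# main_survey
print(page_after_screener({"age_band": "45-54", "owns_car": "Yes"}, criteria))
# disqualified
```

Putting the screening questions on the first page means disqualified respondents are turned away before they spend time on questions that will not be used.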

Example of the Benefits of Using Logic

To start with, here is an example of a transport survey. This is the kind of thing that might be sent out by a company HR department to get employee feedback on commuting habits.

Try survey without skip logic (opens in new window)

You may notice that several questions on the survey won’t be relevant to all respondents. The questions about parking will not be relevant to people who always use public transport, for instance. So, we have also created a version of this survey using Skip Logic.

Try survey with skip logic (opens in new window)

You’ll note that, depending on your answers, you don’t see certain pages. If you want to look at the rules in this survey in more detail, then have a look at this page on the website. That’s a simple example, but much more complex things can be set up.

More Examples from our Clients:

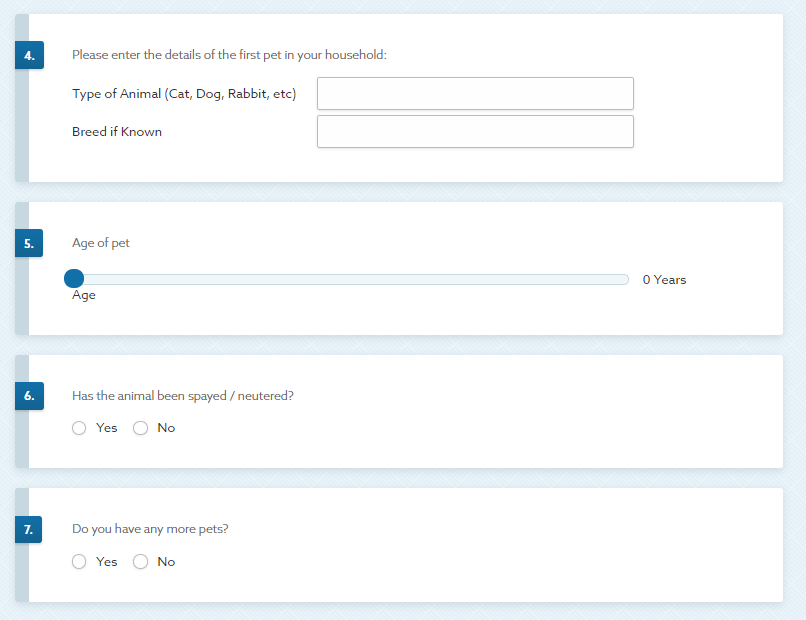

A census-style survey for pets that asks for the details of the owner, then those of one pet, and then at the end of the page asks if the owner has any more pets. If they say “no”, they are taken to the end of the survey, and if they say “yes”, they are taken to a page for a new pet. This repeats up to ten times.

A long survey for a public sector organisation that included questions that were common to all respondents and some that were only appropriate for respondents who worked in particular departments. Skip logic was set up to ensure that respondents were skipped past inappropriate content and questions.
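The repeating pattern in the pet census example can be sketched as a loop with a fixed upper limit. This is only a model of the page flow, not the client’s actual setup. The page names are hypothetical, and the sketch assumes that "up to ten times" means at most ten pet pages in total.

```python
MAX_PETS = 10  # assumed cap: ten pet pages in total


def pet_survey_path(more_pets_answers):
    """Return the pages a respondent visits.

    more_pets_answers: the respondent's "yes"/"no" answers to
    "Do you have any more pets?", in the order they were given.
    """
    pages = ["owner_details"]
    for pet_number in range(1, MAX_PETS + 1):
        pages.append(f"pet_{pet_number}")
        if pet_number == MAX_PETS:
            break  # no further pet pages exist after the cap
        index = pet_number - 1
        answer = more_pets_answers[index] if index < len(more_pets_answers) else "no"
        if answer != "yes":
            break  # "no" sends the respondent to the end of the survey
    pages.append("end")
    return pages


print(pet_survey_path(["yes", "no"]))
# ['owner_details', 'pet_1', 'pet_2', 'end']
```

In a survey tool this is typically built as ten near-identical pet pages, each ending with the same "any more pets?" question and a rule that jumps to the end page on "no".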

If you have further questions about dealing with “right to be forgotten” or methods of collecting respondent consent on surveys please take a look at our help guides:

- Using SmartSurvey to Collect GDPR Consent

- Enabling Anonymous Responses

- Removing Personal Data from Surveys

Moving forward

The capabilities of skip logic are enormous, and in later guides, we’ll talk about how it can be combined with other features to achieve more complex results. In our next article, though, we’re going to discuss contact lists and email distribution.